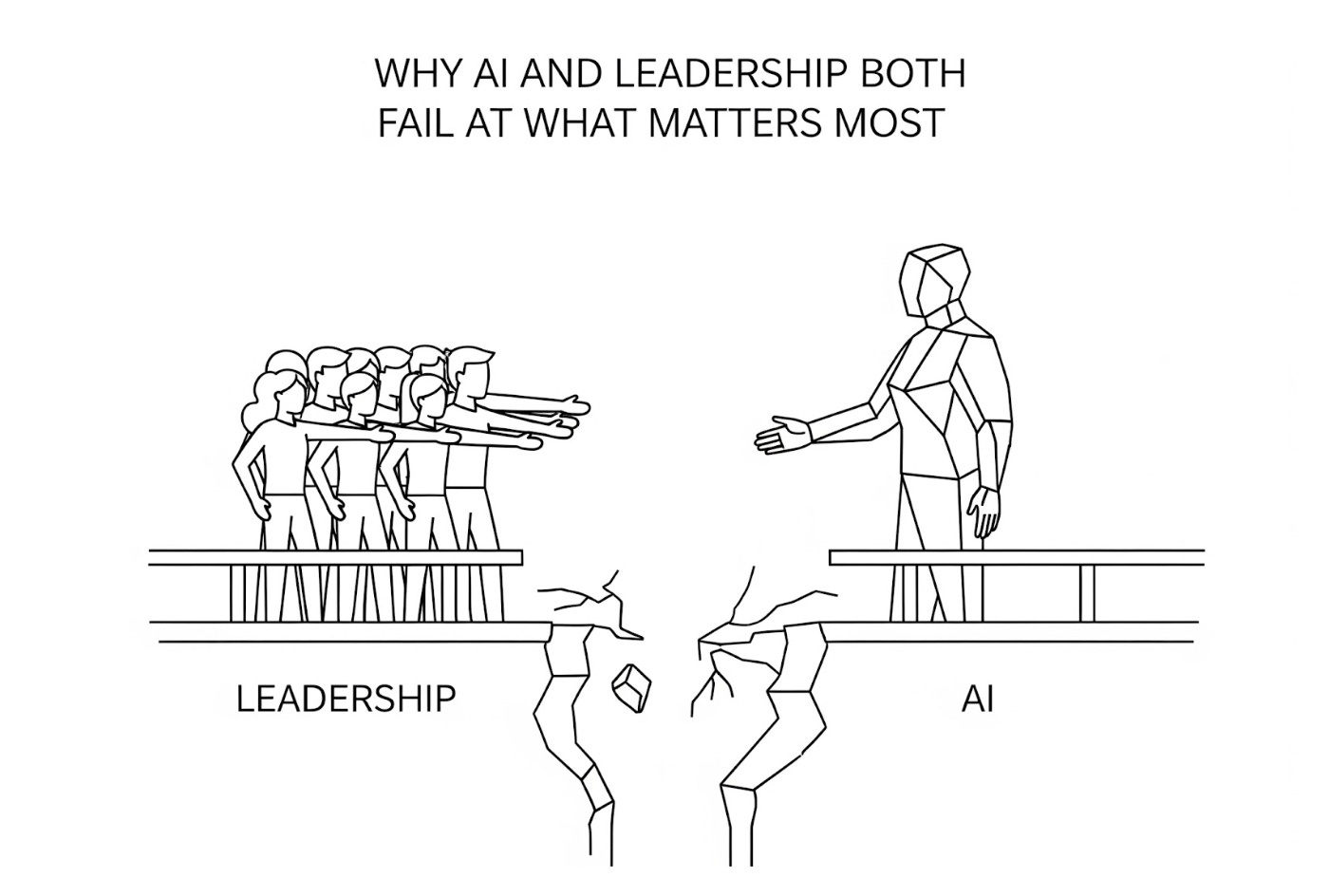

The Causality Problem: Why AI and Leadership Both Fail at What Matters Most

Hope springs eternal in the minds of many investors and business leaders that artificial intelligence will soon be able to solve their most pressing concerns. Yet both AI and corporate leadership suffer from the same fundamental blind spot: a failure to understand the causal relationships that drive real outcomes. This lack of clarity is what creates a “causality gap,” a void in decision-making that makes us blind to how our chosen actions actually create results.

At Stealth Dog Labs, our research into this “causality gap” has revealed a clear connection between how decisions are made within an organization and the financial consequences that arrive as a result, sometimes as far as eighteen months into the future. Unfortunately, for most organizations, it is precisely this time delay that hides the inherent causal relationships that determine the success or failure of decisions. And because traditional measurement approaches focus purely on outcomes rather than the decision-making processes that create them, these variables might as well be invisible.

Yet, at Stealth Dog, we can measure how an executive’s risk tolerance patterns correlate with cash flow variability eighteen months later. We can quantify how planning horizon preferences predict strategic consistency over multi-year periods. We can identify cognitive biases that create predictable operational blind spots. We can also see why stocks are range-bound and in decline. And, in a few cases, we can see why harmonious companies consistently grow with little downside.

Can AI do this? No.

AI’s Limitations, According to Its Godfather

Yann LeCun is one of the most influential figures in artificial intelligence and deep learning. In 2018, he won the Turing Award (the “Nobel Prize of Computing”) for his work on deep learning. He is considered one of the “godfathers of AI.” And he is currently serving as Chief AI Scientist at Meta. But he is also known for his strong opinions on the limitations of AI, which reveal an even deeper problem that extends beyond AI into the realm of executive decision-making.

For example, LeCun argues that while current AI systems can manipulate language brilliantly, they still lack what he calls “an understanding of the physical world.” In other words, they fail to grasp cause-and-effect relationships that exist beneath surface patterns. LeCun goes even further in suggesting that the language complexity we perceive when using AI is actually an illusion created by the machine’s ability to manipulate a finite set of linguistic symbols. This means that the machine can produce compelling text that appears intelligent, yet it still fails to understand the causal structures that create meaning behind those linguistic symbols.

According to LeCun, truly intelligent systems need persistent memory and the ability to reason about abstract goals. That means, in order for AI to reach the lofty goals set for it by the PR machine, it would need to understand the underlying structures that give language meaning instead of simply processing the language itself. It would also need the ability to connect actions to consequences, or a deep understanding of causality. This is something AI intrinsically lacks, with LeCun calling for “world models” that might help the systems simulate consequences and plan effectively.

Yet what LeCun does not seem to realize is that this “causality gap” is prevalent in the real world too.

Dysfunction Awareness (or Lack Thereof)

Similar to AI, many executives have mastered the language of basic business, financial modeling, strategic frameworks, and market analysis while completely missing the causal relationships between their decisions and eventual outcomes. As a result, roughly 22% of organizations today are in full zombie mode, while 62% are under duress. These are organizations that have optimized for quarterly metrics without understanding the abstract complexity behind their decisions. Meanwhile, executives of these companies remain unaware of their own decision-making blind spots, cognitive biases, and even the downstream effects of their choices on organizational performance.

AI mirrors this lack of self-awareness, as it cannot recognize its own biases and limitations either. Instead, these systems produce confident-sounding responses even when operating outside of their competence, creating an illusion of capability that masks fundamental dysfunction. And it is this invisible dysfunction, whether human or machine, that creates compounding problems which often become visible only once it’s too late to course-correct effectively.

To avoid these issues, leaders must gain self-awareness about their inherent decision-making patterns and how these patterns impact systems and people over time. Such knowledge is key to successful business operations and profitability, but it’s something that AI fundamentally lacks.

The Missing Layer

When business leaders fail to understand their cognitive biases, risk preferences, and blind spots, they make inconsistent choices that create organizational confusion and strategic drift. The remedy for this deficiency is what we call “mindset types,” or action-logics. These are a set of language-derived metrics that are causal to decision-making, reflecting both underlying values and intuitive dimensions, such as risk tolerance and worldview. This “invisible” information is what actually guides our decision-making at the subconscious level across different contexts. But without this layer of depth and understanding, both human leadership teams and AI struggle. The former end up with ineffective decisions, the latter with incoherent responses. And both are bad for business.

The most effective business leaders inherently understand this dilemma. That is why they actively seek out “causal intelligence,” hoping to discover the underlying cause-and-effect relationships that remain mostly hidden beneath surface-level correlations. Moreover, they understand that their own personality traits and decision-making patterns create ripple effects throughout the organization that are inevitably revealed by financial performance. But for the first time, this can be measured for optimal success—not with AI, but with Stealth Dog Labs.

The Path Forward

Both AI development and leadership effectiveness require the same fundamental breakthrough: systems that understand causality rather than merely process information.

For AI, this means developing world models that can simulate consequences and plan hierarchically. For leadership teams, it means a departure from traditional performance systems built to capture lag indicators (what happened) rather than lead indicators (what’s causing future performance). In both cases, the result is a far deeper understanding of causality, as well as the metrics behind the metrics. It means looking beyond linguistic façades or symbolic manipulation to discover how choices actually create results. And it means a greater appreciation for how our choices actually affect our financials, before the results are splashed across a quarterly report.

As of this writing, AI has not achieved this level of sophistication or intelligence. But for clients at Stealth Dog Labs, causal intelligence tools are providing the competitive advantage they need to not only survive in this uncertain landscape but actually thrive. And that advantage didn’t come from a prompt or a hack or AI hype. Their results were created by knowing where and how to look.

Ready to solve your “causality gap”? Contact us.