The Intelligence-Quality Convergence

Why Yann LeCun and W. Edwards Deming Point to the Same Solution

The Pattern Hidden in Plain Sight

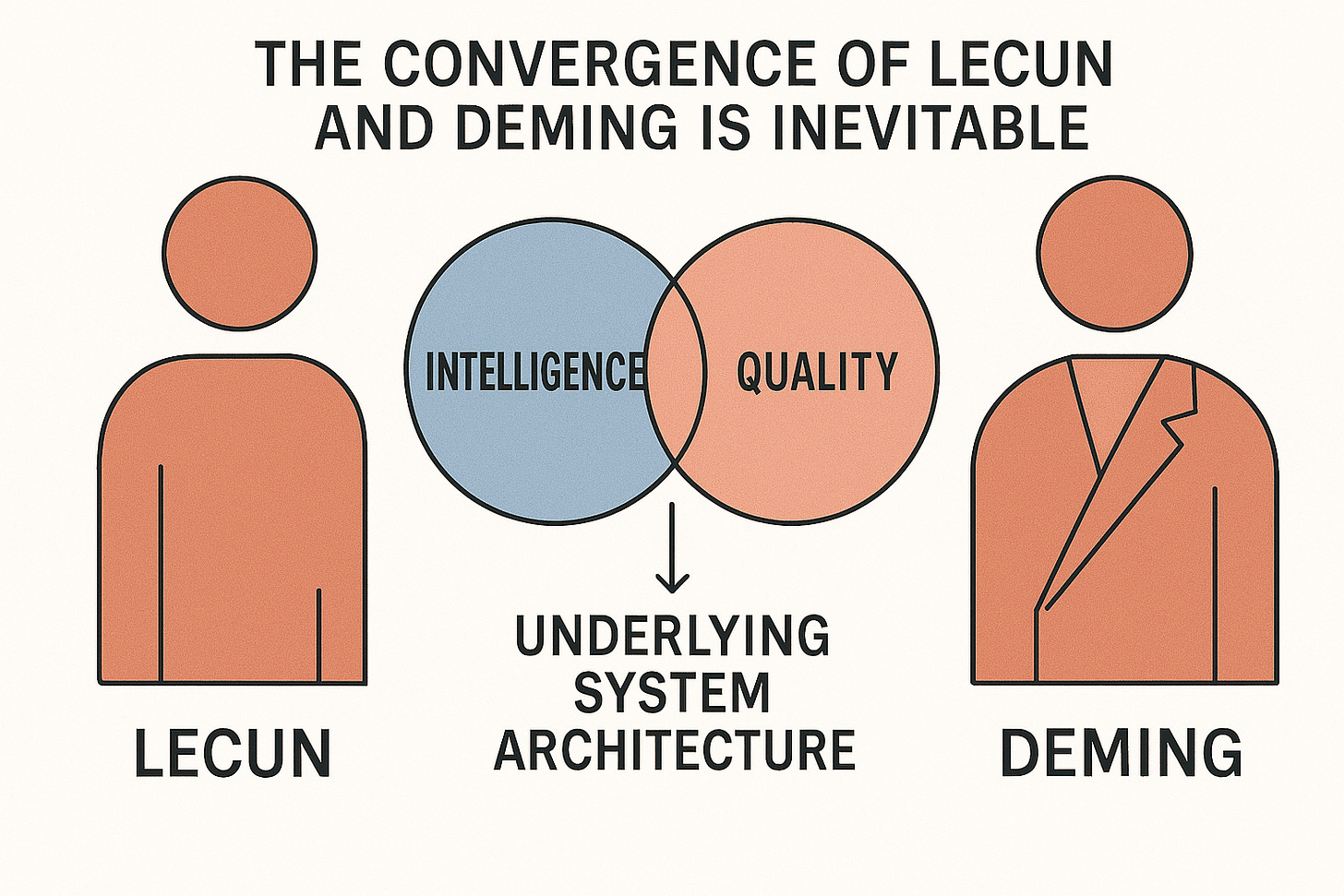

Yann LeCun’s four requirements for true intelligence and Deming’s 14 Points for quality management describe the same fundamental architecture: the one that determines whether any system, human or artificial, can function effectively.

LeCun’s Four Intelligence Requirements and Their Deming Parallels

1. Understanding the Physical World

LeCun: AI systems must comprehend physical reality and cause-effect relationships.

Deming Connection: Bad leaders operate in abstract bubbles, disconnected from the actual work being done. They make decisions based on spreadsheets rather than on an understanding of how their choices affect real production, real people, and real customer outcomes.

2. Persistent Memory

LeCun: Intelligence requires maintaining relevant information across time and contexts.

Deming Connection: Effective quality management demands institutional memory. That means learning from past improvements, maintaining process knowledge (which Deming would place within his System of Profound Knowledge), and building on accumulated wisdom rather than restarting initiatives every few years.

3. Reasoning Capability

LeCun: True intelligence involves logical analysis and inference from available information.

Deming Connection: The 14 Points collapse without leaders who can reason systemically. Such leaders understand how relationships affect quality, how fear stifles innovation, and how training investments compound over time.

4. Planning Ability

LeCun: Intelligence requires projecting future scenarios and developing strategies to achieve goals.

Deming Connection: “Constancy of purpose,” the first of the 14 Points, is fundamentally about planning: long-term strategic thinking that transcends quarterly pressures and short-term optimization.

The Psychological Bottleneck

Here’s where both frameworks reveal the same critical insight: you cannot train these capabilities into systems (human or AI) that lack the foundational architecture to support them.

Consider the historical pattern of dictatorial leaders. They consistently exhibit misdirected empathy, or what I would call predatory empathy. They tend to be opportunistic by nature, and they share a number of other characteristics that separate them from other types of leaders.

These psychological patterns make it impossible to execute either LeCun’s intelligence requirements or Deming’s quality principles. You cannot teach planning to someone whose psychology drives short-term opportunism. You cannot teach systems thinking to someone cognitively trapped in self-protective patterns.

So where is the line in the sand? And how well can you quantify this flaw?

The Human-AI Interface Problem

As organizations integrate AI systems, they face a new challenge: the quality of human-AI collaboration depends on whether human leaders meet the same intelligence requirements that we’re building into AI systems.

If your AI system can reason systematically about cause-effect relationships, but your human leaders make emotional, reactive decisions, the interface fails. If your AI maintains persistent memory about process improvements, but your leadership changes strategy every quarter, the system cannot function coherently. Essentially, the two systems are interlinked. And if you’re not measuring both systems correctly, then you’re not likely to see the improvements you hoped for.

The Measurement Imperative

Both LeCun and Deming point to the same solution: standardized measurement across entire ecosystems.

For Deming: Measure psychological capabilities across suppliers, leadership, and customer interfaces. You cannot implement quality management with leaders who lack the mental architecture to sustain it.

For LeCun: Measure intelligence capabilities in both the human and the AI components of your system. The weakest link determines overall performance. How these systems integrate tells you everything, but you cannot measure that integration with visible metrics alone.

The Integration: Measure using the same framework across human and artificial intelligence components. Use remote, unbiased systems that evaluate actual decision-making patterns rather than stated intentions or credentials.
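To make the "same framework" and "weakest link" ideas concrete, here is a minimal Python sketch. It is not the measurement system described in this article. The rubric, the 0.0 to 1.0 scale, the component names, and the scores are all hypothetical. The sketch assumes that every component, human or AI, is scored on the same four capabilities from LeCun's list. It also assumes that each component is only as strong as its weakest capability, and that the system is only as strong as its weakest component.

```python
from dataclasses import dataclass

# Hypothetical shared rubric: LeCun's four capabilities, each scored 0.0-1.0.
CAPABILITIES = ("physical_world", "persistent_memory", "reasoning", "planning")


@dataclass
class Component:
    name: str
    kind: str                 # "human" or "ai"; both use the same rubric
    scores: dict[str, float]  # capability name -> hypothetical score


def capability_floor(component: Component) -> float:
    """Return the component's weakest score across the shared rubric."""
    return min(component.scores[c] for c in CAPABILITIES)


def system_score(components: list[Component]) -> tuple[float, str]:
    """Weakest-link aggregation: return the lowest component floor and its name."""
    weakest = min(components, key=capability_floor)
    return capability_floor(weakest), weakest.name


# Illustrative, made-up scores for one human leader and one AI system.
leader = Component("ops_leader", "human", {
    "physical_world": 0.8, "persistent_memory": 0.4,
    "reasoning": 0.7, "planning": 0.3,
})
model = Component("forecasting_model", "ai", {
    "physical_world": 0.5, "persistent_memory": 0.9,
    "reasoning": 0.8, "planning": 0.6,
})

print(system_score([leader, model]))  # (0.3, 'ops_leader')
```

In this made-up example, the AI component's weakest score (0.5) is higher than the leader's (0.3). The leader's planning score therefore caps the whole system. This is the interface failure described earlier, where a capable AI is paired with leadership that cannot plan. Taking the minimum is deliberately strict. A real framework might weight capabilities differently or model how components interact.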

This is the system I built. When I started, I never imagined that AI would have a personality, one that in many ways resembles that of an organizational team. I have spent considerable time measuring how different teams get along and how they should work together. Now AI, as a personality in its own right, must become the next person in the room to be measured for harmony within an organization.

Beyond Surveys to Psychological Reality

Traditional approaches fail because they rely on defective measurement and reporting systems, such as surveys and self-reports. A leader can articulate perfect planning principles while being psychologically incapable of executing them. An AI system can pass a reasoning test while still failing in real-world applications.

The solution requires measuring the underlying patterns of psychological traits, mindsets, and dysfunction.

The Convergence Point

LeCun’s intelligence framework and Deming’s quality principles converge on the same insight: effective systems require foundational capabilities that either exist or don’t exist in their individual components.

You cannot train intelligence into AI systems that lack the basic architecture. You cannot train quality management into leaders who lack the psychological capacity. You cannot create effective human-AI collaboration without measuring and matching these fundamental capabilities.

Implications for Organizational Strategy

Stop assuming training solves capability gaps. Whether human or artificial, some systems simply cannot execute certain functions regardless of programming or education.

Start measuring foundational architecture. Before investing in quality initiatives or AI integration, measure whether your human and artificial components have the basic intelligence requirements to make them work.

Build ecosystem-wide standards. Use the same measurement framework across suppliers, leadership, AI systems, and customer interfaces to ensure coherent operation.

Do not influence what you are measuring. In other words, avoid introducing bias anywhere in the ecosystem; otherwise the data is unreliable. (We ensure this with remote measurement.)

The Bottom Line

The future belongs to organizations that treat human and artificial intelligence as a measurable, predictable architecture rather than an assumed capability. Those that measure and match these requirements across their entire ecosystem will achieve a sustainable competitive advantage. Those that don’t will keep experiencing the same implementation failures, however sophisticated their methodology.

In other words, if you’re not measuring, don’t expect to find causality.

The convergence of LeCun and Deming is inevitable. Intelligence and quality are different expressions of the same underlying system architecture. Organizations that understand this will build the future. Those that don’t will become case studies in what doesn’t work.